Book Project: Understanding Immigrants’ Racial Attitudes

Race and immigration have been deeply intertwined throughout American history. In this project, I ask how immigrants from four different racial and ethnic groups see themselves within American racialized hierarchies, especially in relation to native-born Black Americans. Drawing on nationally representative surveys such as the ANES, the CCES, and the GSS, as well as multiple original surveys of immigrants, I find that immigrants of all four groups have more negative attitudes toward Black Americans than do their native-born co-ethnics. These differences cannot be explained by differences in demographics, partisanship, or social desirability bias, or by increased contact with Black Americans.

I argue that differences in racial attitudes are the result of immigrants’ increased optimism about the United States. Using a survey measure of US and native country optimism, I find that immigrants are substantially more optimistic about the US than are their native-born co-ethnics. These differences in optimism mediate native-immigrant differences in racial resentment and other negative attitudes toward Black Americans. Immigrants, who affirmatively chose the US as their home, experience substantially more cognitive dissonance between their very positive US attitudes and the realities of racial inequality than do the native-born, who did not make this choice. As a result, immigrants feel more pressure to reduce this dissonance through derogation of Black Americans.

Some of my early findings from this project are available in the form of a working paper. This project has been funded by RSF Grant G-2107-33264. The survey funded by this grant is available here.

Selected Other Projects:

Indentured Citizens: How Student Loan Debt Shapes Americans' Attitudes Toward Inequality

With the rising cost of college, more and more Americans have taken out loans to pay for their education. With average repayment periods lasting 20 years or longer, this paper asks: how does student loan debt shape Americans' political attitudes? Using a combination of publicly available and original surveys, I find that student loan debtors have more egalitarian attitudes toward racial and economic inequality, lower social trust, and a decreased belief that society is fair and just. These effects persist even for borrowers who have repaid their student loans, and are not explained by differences in demographics, partisanship, parental socioeconomic status, or college experience. These findings suggest that the student loan system is a substantial contributor to the political socialization of college-educated adults in the United States.

Working paper coming soon

Fear and Loathing in St. Louis: Gun Purchase Behavior as Backlash to Black Lives Matter Protests

(with Elad Yom-Tov and David Rothschild)

How do Black civil rights protests affect Americans' gun ownership decisions? Using a novel dataset of gun-related web searches in combination with geocoded protest data, we examine the effects of the 2020 Black Lives Matter protests on Americans' intent to purchase firearms. We find a clear relationship between geographic proximity to BLM protests and firearm purchase web searches, but a null relationship between these searches and proximity to re-opening protests. We then examine racial attitudes of would-be gun buyers using users' web search histories and find that racially conservative searchers had significantly larger spikes in gun purchase interest during the 2020 BLM protests than did other comparable searchers. These results suggest that Black civil rights protests can serve as a catalyst for gun purchases among racially conservative Americans.

Anti-Immigrant Rhetoric and ICE Reporting Interest: Evidence from a Large-Scale Study of Web Search Data

(with Shawndra Hill and David Rothschild)

This paper studies whether media cues can motivate Americans to report suspected unauthorized immigrants to Immigration and Customs Enforcement (ICE). Using Google Trends data, a novel dataset of immigration-related Bing web searches, and automated content analysis of cable news transcripts, we examine the effect of post-2016 media coverage on searches for information about how to report immigrants to ICE, as well as on searches about immigrants related to crime and welfare dependency. We find significant and persistent increases in news segments on crime by immigrants and their use of public services after Trump's inauguration, accompanied by a sharp increase in searches for reporting immigrants. We find a strong and consistent association between daily reporting searches and immigration and crime coverage, as well as media fear cues about immigrants. Using timestamped searches during broadcasts of Trump's and Obama's speeches, we isolate the specific effect of anti-immigrant media coverage on searches for how to report immigrants to ICE. The findings indicate that the media's choices regarding the coverage of immigrants can have a strong impact on the public's willingness to engage in behavior that directly harms immigrants.

From Anti-China Rhetoric to Anti-Asian Behavior: The Social and Economic Cost of “Kung Flu”

(with Justin Huang, David Rothschild, and Julia Lee Cunningham)

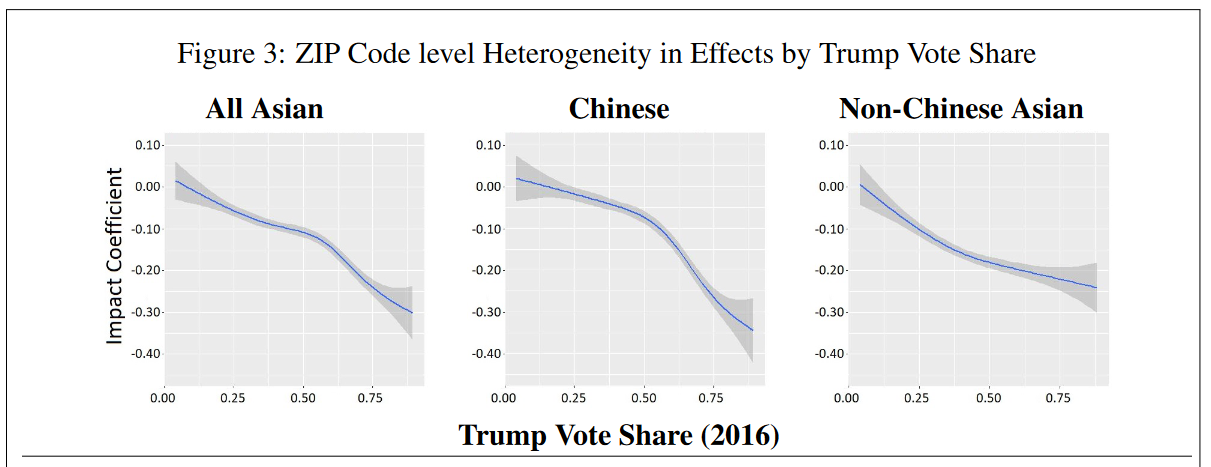

Discrimination and violence directed towards Asian Americans in the United States increased dramatically following the onset of the COVID-19 pandemic. In this paper, we examine consumer discrimination against businesses associated with Asian Americans. Leveraging the pandemic as an exogenous shock to Americans' level of anti-Chinese sentiment, we utilize a series of analyses combining survey data, online search trends, and consumer cellular device mobility data to measure the effects of this shock on consumer discrimination against Chinese and other Asian restaurants. Survey and search data show that attitudes towards Chinese and non-Chinese Asian food declined precipitously during the pandemic, and this change in attitudes was driven by a mix of assigning blame for COVID-19 spread to Asians and experiencing fear of Chinese food. Analysis of cellular phone mobility data shows Asian restaurants suffered an 18.4% drop (95% C.I.: -15.9% to -20.8%) in traffic relative to non-Asian restaurants in the pandemic period. We explore heterogeneity in these effects by political affiliation and find a strong correlation between support of former President Trump and avoidance of Asian restaurants. The results are consistent with the role of out-group homogeneity and ethnic misidentification as drivers of spillover effects of anti-Chinese sentiment on non-Chinese Asian restaurant traffic. This work documents some of the unique economic challenges faced by Asian Americans during the COVID-19 pandemic and has substantial implications for the study of consumer discrimination and stigmatization in public health communications.

Do Partisans Make Different Investment Decisions When Their Party is in Power?

(with Shawndra Hill and David Rothschild)

Partisans' stated beliefs about the economy vary dramatically depending on the party that holds the presidency. Do these responses represent genuine differences in beliefs about the economy, or do they reflect partisans' expressive reporting on surveys? To answer this question, we rely on a novel dataset of Bing searches related to housing, automobiles, and stock market purchases by partisans from February 2016 to July 2017, as well as a dataset of personal vehicle registrations from the Department of Motor Vehicles (DMV) in New York State. We find that in the aftermath of the 2016 election, Democrats, as members of the losing party, were less likely to search for both house and car purchase terms. Furthermore, we find that Republican ZIP codes experienced a greater increase in car registrations in 2017 than Democratic ZIP codes. This statistically significant and meaningful shift in investment behavior suggests that partisans' survey responses are actually due to different beliefs about the economy, rather than just expressive reporting.

Does Partisanship Affect Compliance with Government Recommendations?

This article studies the role of partisanship in Americans' willingness to follow government recommendations. I combine survey and behavioral data to examine partisans' vaccination rates during the Bush and Obama administrations. I find that presidential co-partisans are more likely to believe that vaccines are safe and more likely to vaccinate themselves and their children than presidential out-partisans. Depending on the vaccine, presidential co-partisans are 4-10 percentage points more likely to vaccinate than presidential out-partisans. This effect is not the result of differences in partisan media coverage of vaccine safety, but rather of differing levels of trust in government. This finding sheds light on the far-reaching role of partisanship in Americans' interactions with the federal government.